AI Workshops for Non-Technical Teams: What We Learned

Lessons from teaching AI to faculty, business students, and teams with zero technical background. The gap isn't intelligence — it's exposure.

We started running AI workshops because we kept hearing the same thing from clients: "My team doesn't get AI." After enough discovery calls where founders and department heads described teams that were either afraid of AI or thought it was limited to drafting emails, we decided to do something about it.

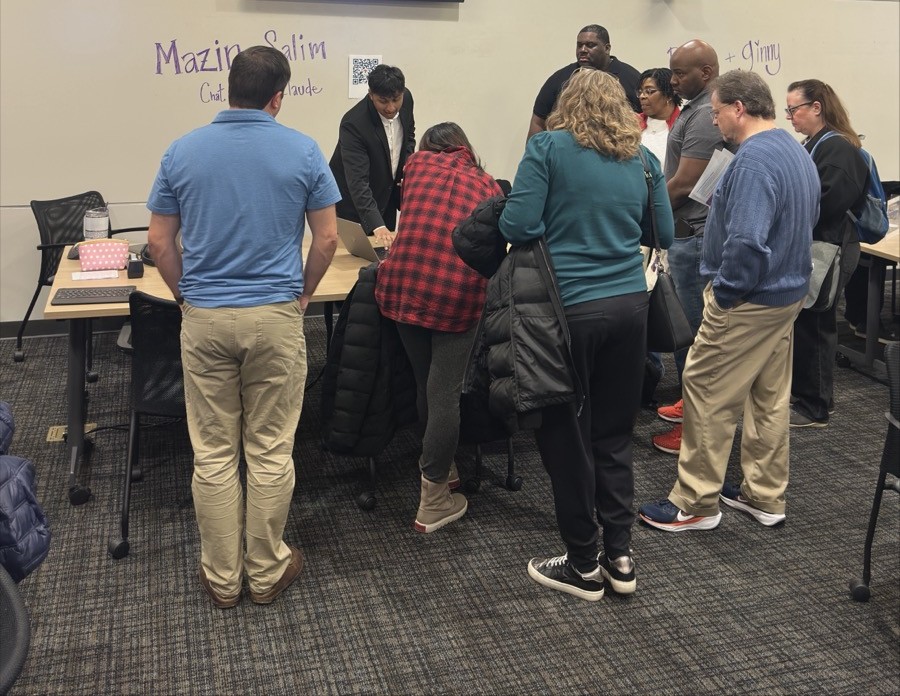

Our co-founder Mazin, who works as an AI engineer at Auburn University, ran a series of workshops for faculty, staff, and business students between November 2024 and January 2025. Three workshops, three very different audiences, one consistent finding: the gap between people who use AI effectively and people who don't isn't intelligence. It's exposure.

Most teams we work with have smart, capable people. They've just never seen what AI can actually do when you give it a real problem and a well-structured prompt. Their mental model is stuck somewhere around "write me an email" and "summarize this article." That's not a skills problem. It's an exposure problem. And exposure is something you can fix in an afternoon.

The Perfect Prompt Formula

The first thing we teach in every workshop is the Perfect Prompt Formula. It sounds simple, and it is. That's the point. Most people interact with AI tools the way they'd type into a Google search bar: short, vague, hoping the system figures out what they want. The formula gives them a framework that immediately produces better results.

Context + Specific Information + Goal + Format = a good prompt.

Here's what each piece means:

Context is who you are and what situation you're in. "I'm a supply chain manager at a manufacturing company" gives the AI a lens to interpret everything that follows.

Specific Information covers the details that make the prompt yours, not generic: the data, the constraints, the background the AI wouldn't know otherwise.

Goal is what you actually want. Not "help me with this" but "identify the three facilities with the highest late delivery rates and explain what's driving the pattern."

Format is how you want the output structured. A bullet list, a table, a two-paragraph summary, a Python script. Telling the AI the shape of the answer you need cuts out most of the back-and-forth.
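For readers who think in code, the four pieces can be treated as named fields that get assembled into one prompt. The sketch below is only an illustration, not part of the workshop materials: the `PromptParts` class, the `build_prompt` helper, and the sample "specific information" text are assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class PromptParts:
    """The four pieces of the formula (field names are illustrative)."""
    context: str
    specific_information: str
    goal: str
    output_format: str


def build_prompt(parts: PromptParts) -> str:
    """Join the four pieces into one labeled prompt; raise if any piece is empty."""
    sections = [
        ("Context", parts.context),
        ("Specific information", parts.specific_information),
        ("Goal", parts.goal),
        ("Format", parts.output_format),
    ]
    missing = [label for label, text in sections if not text.strip()]
    if missing:
        raise ValueError(f"Prompt is missing: {', '.join(missing)}")
    return "\n\n".join(f"{label}: {text.strip()}" for label, text in sections)


example = PromptParts(
    context="I'm a supply chain manager at a manufacturing company.",
    # Hypothetical detail, invented for this sketch:
    specific_information="Attached is last quarter's order data with due dates, ship dates, and facility names.",
    goal="Identify the three facilities with the highest late delivery rates and explain what's driving the pattern.",
    output_format="A table of the three facilities, followed by a two-paragraph summary.",
)

prompt = build_prompt(example)
print(prompt)
```

The check for empty fields is the useful part: a prompt that skips the goal or the format is usually the "search bar" prompt in disguise.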

We walked Auburn faculty through this in our first workshop, "AI Without the Overwhelm," a three-session series in November 2024. The audience was university faculty and staff, most of whom had either never used ChatGPT or had tried it once and been underwhelmed. We covered the basics of both ChatGPT and Claude, but the prompt formula was the thing that shifted the room. You could see it happen in real time: someone who'd been getting vague, generic outputs suddenly got a response that was specific, structured, and actually useful.

One faculty member had been trying to get AI to help draft a grant proposal for weeks. She'd been typing things like "write a grant proposal about educational technology." During the workshop, she restructured it: she gave the AI her role, her department's research focus, the specific funding agency's priorities, the goal of producing a compelling project summary, and the format the agency required. The output went from boilerplate to something she could actually work with. Same tool, same person, same goal. The only difference was the prompt.

From Emails to Analytics

The workshop that changed how we think about AI education was a guest lecture Mazin delivered in January 2025 for Dr. Anthony Roath's supply chain management class at Auburn's Harbert College of Business.

These were business students. Smart, motivated, but not technical. When we asked the room how many had used AI for anything beyond writing or summarizing, almost no one raised a hand. Most had never heard of Claude Code. Their assumption was that AI was a text tool: you type words in, you get words out.

We built a case study around a real-world problem. Fedrigoni, an Italian paper and packaging company, had a late delivery rate of 17.4% across their operations, costing them an estimated 573,000 euros annually. We gave the students a dataset of 5,000 orders and asked a simple question: can you figure out why deliveries are late and predict which ones will be late in the future?

Then we handed them Claude Code.

Within the session, students who had never written a line of code were using AI to analyze 5,000 rows of order data, identify patterns in late deliveries, and build a model that predicted which orders would arrive late with 78% accuracy.

The findings were real and actionable. Students discovered that Art & Design Papers had a late delivery rate three times that of Wine Labels, even though both come from the same company. The Sao Paulo facility was running at a 21.9% late delivery rate compared to Verona's 12.7%. Certain product-facility combinations were driving a disproportionate share of the problem.

None of this required students to understand Python, statistics, or machine learning. They described what they wanted to analyze in plain English. Claude Code wrote the scripts, ran the analysis, generated the visualizations, and presented the results. The students' job was to ask the right questions and interpret the business implications — which is exactly what supply chain managers are trained to do.
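To give a sense of what such a script can look like, here is a minimal sketch of the simplest step: a late delivery rate broken down by group. It is not the code Claude Code produced in class. The rows are invented toy data, not the Fedrigoni dataset, and the column names (`facility`, `product`, `late`) and the `late_rate_by` helper are assumptions.

```python
from collections import defaultdict

# Invented toy rows for illustration only (NOT the workshop dataset).
orders = [
    {"facility": "Verona", "product": "Wine Labels", "late": False},
    {"facility": "Verona", "product": "Wine Labels", "late": False},
    {"facility": "Verona", "product": "Art & Design Papers", "late": True},
    {"facility": "Verona", "product": "Wine Labels", "late": False},
    {"facility": "Sao Paulo", "product": "Art & Design Papers", "late": True},
    {"facility": "Sao Paulo", "product": "Art & Design Papers", "late": False},
    {"facility": "Sao Paulo", "product": "Wine Labels", "late": False},
    {"facility": "Sao Paulo", "product": "Art & Design Papers", "late": True},
]


def late_rate_by(rows, *keys):
    """Return {group: share of late orders}, grouping on one or more columns."""
    totals = defaultdict(int)
    late = defaultdict(int)
    for row in rows:
        group = tuple(row[key] for key in keys)
        totals[group] += 1
        late[group] += row["late"]  # True counts as 1, False as 0
    return {group: late[group] / totals[group] for group in totals}


by_facility = late_rate_by(orders, "facility")
by_combo = late_rate_by(orders, "facility", "product")

# Print facilities from worst to best late rate.
for group, rate in sorted(by_facility.items(), key=lambda item: -item[1]):
    print(f"{group[0]}: {rate:.0%} late")
```

Grouping on both columns at once, as `by_combo` does, is one way to start separating a facility effect from a product-mix effect.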

We open-sourced all the materials from that session at aub.ie/supplychainguide so other educators and teams can use them.

The jump from "AI writes emails" to "AI builds predictive models from my data" happened in a single class period. That's not because the students suddenly became technical. It's because someone showed them what was possible and gave them a structured way to interact with the tool.

What Surprised Us

We went into these workshops expecting to teach people how to use AI. What we actually learned was more interesting.

People's mental models of AI are roughly two years out of date. In December 2024, we helped run a station at the TIGER Tech Leaders "AI Open House" at Auburn, co-sponsored by the Biggio Center and Women in Technology. We guided faculty, staff, and tech leaders through setting up and using ChatGPT and Claude. The most common reaction wasn't confusion. It was surprise. "It can do that?" People who thought they understood what AI could do were working from a model based on whatever they'd seen in a news article in 2023. The tools had moved significantly past their expectations.

The "aha" moment is always the same. Across all three workshops — the beginner faculty sessions, the tech leader open house, and the supply chain lecture — the breakthrough moment was identical. It happened when someone gave AI a problem they actually cared about and got back something useful. Not a demo. Not a toy example. Their data, their question, their workflow. That's when the shift happened.

Resistance isn't about fear of AI. It's about not seeing relevance. The faculty members who were most skeptical at the start of "AI Without the Overwhelm" weren't afraid of AI replacing them. They just didn't see how it connected to their work. Once they used the prompt formula on a real task — drafting assessment rubrics, analyzing student feedback, outlining curriculum changes — the skepticism evaporated. They weren't anti-AI. They were anti-hype. And honestly, that's a reasonable position.

Non-technical teams often ask better questions than technical ones. This was the biggest surprise. The supply chain students didn't ask "can you run a logistic regression on the late delivery flag?" They asked things like "why are Art & Design Papers always late?" and "is the Sao Paulo warehouse the problem or is it the product mix they handle?" Those are better questions. They're rooted in business understanding. The AI handled the technical translation. The humans handled the thinking.

Three Principles for Teaching AI to Non-Technical Teams

Based on these workshops and our ongoing work with client teams, here's what we've learned about getting non-technical people productive with AI.

1. Make it hands-on from the first ten minutes

Slides about AI capabilities don't change behavior. Having someone open Claude, type in a prompt, and see the result — that changes behavior. In every workshop, we moved to hands-on prompting within the first ten minutes. The concept-to-practice gap needs to be as short as possible. People learn AI by using AI, not by hearing about it.

2. Use their real problems, not generic demos

The supply chain case study worked because it was a real supply chain problem given to supply chain students. The faculty workshops worked because we had people prompt about their actual courses, their actual research, their actual administrative headaches. Generic demos ("let's have AI write a poem!") create a novelty response that fades in a day. Real problems create habits that stick.

3. Start with the prompt formula, always

Every audience we've worked with, from university faculty to business students to corporate teams, benefits from the same starting point. The Perfect Prompt Formula gives people a mental model for interacting with AI that immediately produces better results. It works because it's simple enough to remember, flexible enough to apply to any use case, and concrete enough to make a visible difference on the first try. Context, specific information, goal, format. That's it. Once someone internalizes that structure, vague one-line prompts become the exception rather than the habit.

We Do This for Businesses Too

These workshops started at Auburn University, but the lessons apply everywhere. We've seen the same patterns in every organization we work with: capable teams, outdated mental models of AI, and a wide gap between what people think the tools can do and what they can actually do.

At MM Intelligence, AI training is part of how we work with clients. When we build an AI system for a business — a chatbot, an analytics pipeline, an automation workflow — we don't just hand over the deliverable and walk away. We make sure the team that's going to use it actually understands how to use it. That includes structured workshops tailored to your team's actual workflows, hands-on sessions with your real data, and the frameworks that make people self-sufficient with AI tools long after we're gone.

If your team is underusing AI, or not using it at all, the fix is usually simpler than you think. It's not a six-month training program. It's exposure to what's actually possible, a structured way to interact with the tools, and practice on problems that matter to them.

We'd be happy to talk about what that looks like for your team. Get in touch and we'll walk through it.